I have a box with dual hard disks in it and I want to install RL on it with the disks in a spanned configuration. I’m a complete neophyte as far as partitions, LVM, etc, etc go. Is there a reference or tutorial explaining how to do this while doing a fresh install?

The official guide (you can also look at the earlier chapters)

I don’t know what “spanned” means, but it sounds dangerous, for example if a volume extends across both disks, and one fails, that could be bad.

This topic came up on Fedora Forum recently. The person was using a RAID 1 pairing so that, if one drive failed, it could be removed and the other drive would boot and carry on alone until a replacement could be installed and the pair resynced. Assuming that backups are also being made to another device, is this a plausible approach? I don’t know. One thing I do know is that when an SSD goes bad it just dies: no limping, no warning.

“Spanned” tends to mean that a volume spans multiple disks. The trivial example is with LVM. A VG (volume group) has more than one PV (physical volume, a disk) and there is an LV (logical volume) in the VG. Part of the LV is on one disk and the other part(s) on other disk(s).

The benefit of a spanned volume is that you get a larger volume. Say, two 4TB disks can host an 8TB volume.

The downside is that if any member disk breaks, then all data is lost. It might be possible to recover files that are entirely in one disk.
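To make the VG/PV/LV picture concrete, here is a toy model in Python. It is only a sketch: the volume names and sizes are made up for illustration, and it just records which disks each LV has pieces on so you can see what a single disk failure touches.

```python
from dataclasses import dataclass, field


@dataclass
class LogicalVolume:
    name: str
    # Each segment is (physical volume name, size in GiB), in allocation order.
    segments: list = field(default_factory=list)

    def size_gib(self) -> int:
        return sum(size for _, size in self.segments)

    def disks_used(self) -> set:
        return {pv for pv, _ in self.segments}


def lvs_hit_by_failure(lvs, failed_pv):
    """Names of the LVs that have at least one segment on the failed disk."""
    return [lv.name for lv in lvs if failed_pv in lv.disks_used()]


# Hypothetical volume group: root and swap fit on sda, home spans both disks.
root = LogicalVolume("root", [("sda", 70)])
swap = LogicalVolume("swap", [("sda", 8)])
home = LogicalVolume("home", [("sda", 380), ("sdb", 465)])
volume_group = [root, swap, home]

print(home.size_gib())                          # total size of the spanned LV
print(lvs_hit_by_failure(volume_group, "sdb"))  # LVs touched if sdb dies
print(lvs_hit_by_failure(volume_group, "sda"))  # LVs touched if sda dies
```

In this made-up layout the spanned LV is affected whichever disk fails, which is the whole risk in a nutshell.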

“Striped” spans too, but the writes are interleaved. (Not “first 4TB on A and second 4TB on B”.) Files smaller than the stripe size are on one disk, but all bigger files are spread across both/all disks. RAID 0 is striped.
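The difference between the two layouts can be sketched as a mapping from a byte offset in the volume to the disk that holds it. This is a simplified illustration, not how LVM or md actually store their metadata, and the 64 KiB stripe size is just an assumed value.

```python
def linear_disk(offset, disk_sizes):
    """Disk index holding byte `offset` when the disks are simply concatenated."""
    for index, size in enumerate(disk_sizes):
        if offset < size:
            return index
        offset -= size
    raise ValueError("offset is beyond the end of the volume")


def striped_disk(offset, n_disks, stripe_size):
    """Disk index holding byte `offset` when chunks are dealt out round-robin."""
    return (offset // stripe_size) % n_disks


TB = 10**12
KIB = 1024
disks = [4 * TB, 4 * TB]   # the two 4TB disks from the example above
stripe = 64 * KIB          # assumed stripe size

# Spanned (linear): the first 4TB live on disk 0, the rest on disk 1.
print(linear_disk(1 * TB, disks), linear_disk(5 * TB, disks))

# Striped: consecutive 64 KiB chunks alternate between the disks.
print([striped_disk(i * stripe, len(disks), stripe) for i in range(4)])
```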

“Mirror”, for example RAID 1, writes everything to every disk. With those two 4TB disks you get only a 4TB volume, but the data is redundant; every disk has a full copy of the data.
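The capacity arithmetic for the three layouts fits in a few lines. This is a rough sketch that ignores metadata overhead and assumes the simple case where a striped or mirrored set is limited by its smallest member.

```python
def usable_capacity(disk_sizes, layout):
    """Approximate usable size for a set of disks, in the same unit as the input."""
    if layout == "spanned":
        return sum(disk_sizes)                    # disks are concatenated
    if layout == "striped":
        return min(disk_sizes) * len(disk_sizes)  # equal share from every disk
    if layout == "mirror":
        return min(disk_sizes)                    # every disk holds a full copy
    raise ValueError(f"unknown layout: {layout}")


for layout in ("spanned", "striped", "mirror"):
    # Two 4TB disks, then a mismatched 1000GB + 500GB pair.
    print(layout, usable_capacity([4, 4], layout), usable_capacity([1000, 500], layout))
```

With mismatched disks, only the spanned layout uses all of the space, which is one reason an installer might choose it by default.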

Can one set up in the interactive installer a VG that has multiple disks? I have no idea, but probably.

A single, large, “continuous” volume is convenient; you don’t need to consider what to put where. However, multiple separate volumes can be mounted quite flexibly into the tree; a “big disk” is not necessary if one can organize data.

So, I did some research and tried the lsblk command on boxes with varying disk configurations:

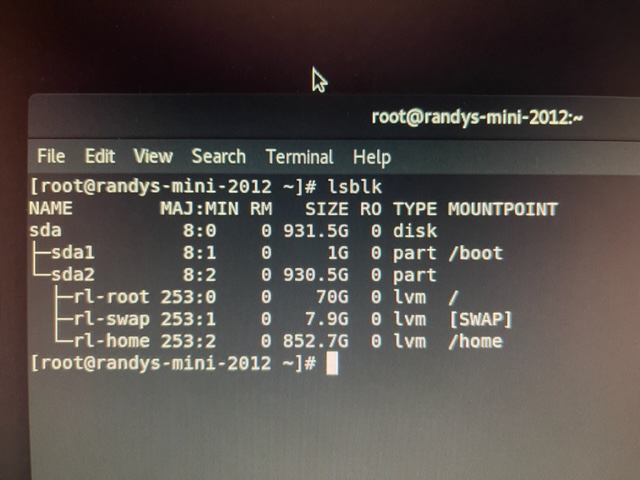

mini-2012 has a single 1TB disk and the output is as shown.

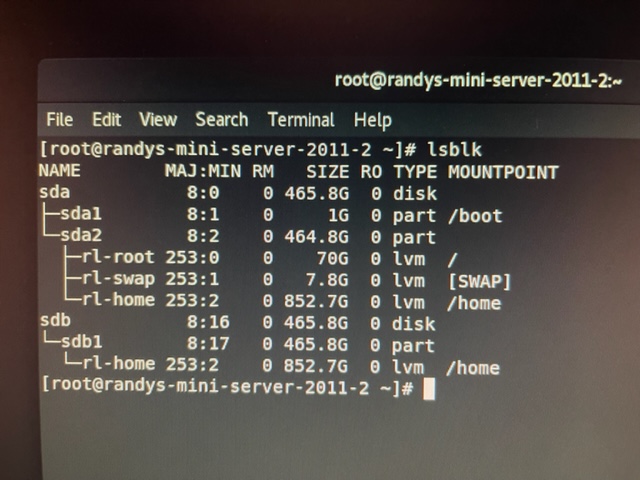

server-2011-2 has dual 500s and its lsblk output is as shown.

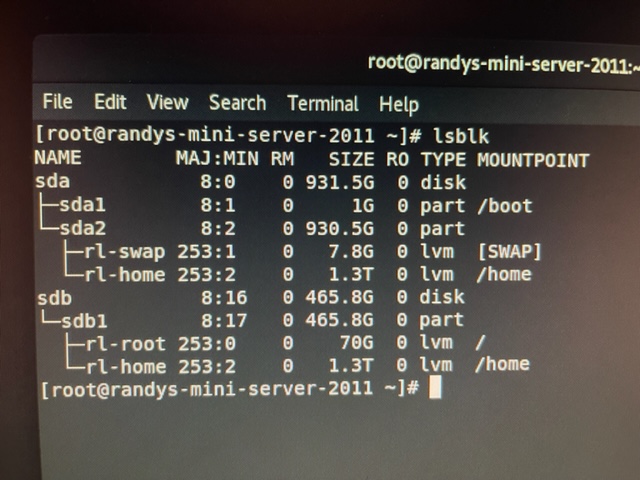

Lastly, server-2011 has a 1TB and a 500 and its output is shown. Apparently, on each system, there is a mount point /home which is an LVM volume and is close to the combined size of the disks installed in each system. Am I seeing things, or did partitioning do what I was hoping to accomplish all by itself?
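If you would rather not eyeball the tree, the same check can be scripted against the JSON that `lsblk -J -b -o NAME,SIZE,TYPE,MOUNTPOINT` prints. The sketch below runs on a made-up sample, not on the real output from the screenshots: the device names and sizes are assumptions. An LV that spans disks shows up under each disk it has a piece on, so it is enough to collect the parent disks per LV.

```python
import json

# Hypothetical lsblk JSON for a box with two 500GB disks (illustrative values only).
SAMPLE = """
{"blockdevices": [
  {"name": "sda", "size": 500107862016, "type": "disk", "mountpoint": null,
   "children": [
     {"name": "sda1", "size": 1073741824, "type": "part", "mountpoint": "/boot"},
     {"name": "sda2", "size": 499033047040, "type": "part", "mountpoint": null,
      "children": [
        {"name": "rl-root", "size": 75161927680, "type": "lvm", "mountpoint": "/"},
        {"name": "rl-swap", "size": 8388608000, "type": "lvm", "mountpoint": "[SWAP]"},
        {"name": "rl-home", "size": 915585892352, "type": "lvm", "mountpoint": "/home"}
      ]}
   ]},
  {"name": "sdb", "size": 500107862016, "type": "disk", "mountpoint": null,
   "children": [
     {"name": "sdb1", "size": 500106788864, "type": "part", "mountpoint": null,
      "children": [
        {"name": "rl-home", "size": 915585892352, "type": "lvm", "mountpoint": "/home"}
      ]}
   ]}
]}
"""


def walk(devices, parent_disk=None):
    """Yield (device, name of the disk it sits on) for every node in the tree."""
    for dev in devices:
        disk = dev["name"] if dev["type"] == "disk" else parent_disk
        yield dev, disk
        yield from walk(dev.get("children", []), disk)


def lvm_volumes(tree):
    """Map each LV name to its size, mount point and the set of disks under it."""
    volumes = {}
    for dev, disk in walk(tree["blockdevices"]):
        if dev["type"] == "lvm":
            entry = volumes.setdefault(
                dev["name"],
                {"size": int(dev["size"]), "mountpoint": dev["mountpoint"], "disks": set()},
            )
            entry["disks"].add(disk)
    return volumes


volumes = lvm_volumes(json.loads(SAMPLE))
for name, info in volumes.items():
    spans = "spans " if len(info["disks"]) > 1 else "on "
    print(name, info["mountpoint"], spans + ", ".join(sorted(info["disks"])))
```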

You have achieved your objective.

Sweet. Well, in my use case anyhow. So the bottom line is that installing on a system with multiple disks, allocating all the storage, and letting the installer handle partitioning automatically results in this kind of setup. A good thing to know whether you’re shooting for that or if you want it set up some other way.

I don’t think this is a good setup. The Volume Group (VG) called ‘rl’ spans two disks, so what happens if one disk fails? The other problem is that you should really limit the size of /home; otherwise it will eat all the space, and you can’t shrink it.

With two disks, I’d normally put the o/s on /dev/sda, and then create two volume groups on /dev/sdb, ‘vgh’ for /home, and ‘vgd’ for /data. The purpose of /data is for everything else that’s not a homedir, such as web sites, databases, shares etc.

Since when can you not shrink an LVM? I have done it many times. True, there are limitations on how much you can shrink an LVM - without causing damage to data, but you can shrink an LVM. It is risky, but it can be done. Here is a useful link that I have used myself:

https://www.tecmint.com/extend-and-reduce-lvms-in-linux/

Using RAID0 is risky, as jlehtone pointed out: if you lose one disk, you lose all of the data in the RAID0 volume. RAID0 is meant for artificially creating a larger “disk”, which in reality is a larger volume group that can then be broken down into smaller logical chunks (LVMs).

Every non-redundant span has the risk. Only redundant arrays can tolerate some disk breakage.

When you put XFS into the LV. You can’t shrink an LV that contains an unshrinkable filesystem.

Not all filesystems are shrinkable. ext4 for instance is, XFS (which is the default) is not.
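As a rough illustration of why the filesystem type decides this, here is a dry-run planner in Python. It only builds a list of commands and never runs them. The `/dev/rl/home` path and the 100G target are assumptions for the example, and the ext4 sequence shown (unmount, check, shrink the filesystem, then shrink the LV) is one common order rather than the only way to do it. Take a backup before trying anything like this for real.

```python
def shrink_plan(fstype, lv_path, new_size):
    """Return the commands (as argv lists) to shrink a filesystem and its LV.

    Nothing is executed here; the caller decides what to do with the plan.
    """
    if fstype == "xfs":
        # XFS can be grown but not shrunk, so reducing the LV would destroy data.
        raise ValueError("XFS cannot be shrunk; back up, recreate smaller, restore")
    if fstype != "ext4":
        raise NotImplementedError(f"no shrink plan for {fstype}")
    return [
        ["umount", lv_path],                     # ext4 must be offline to shrink
        ["e2fsck", "-f", lv_path],               # resize2fs expects a fresh check
        ["resize2fs", lv_path, new_size],        # shrink the filesystem first
        ["lvreduce", "-L", new_size, lv_path],   # then shrink the LV to match
    ]


for argv in shrink_plan("ext4", "/dev/rl/home", "100G"):
    print(" ".join(argv))

try:
    shrink_plan("xfs", "/dev/rl/home", "100G")
except ValueError as err:
    print("refused:", err)
```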

I thought I had done both a shrinking of an XFS and also ext4. I know I have grown both, perhaps that is where I am confused. Thanks for the clarification.

Why doesn’t XFS permit LVM shrinking? That is a silly design choice.

XFS was implemented by SGI and is surprisingly old. The technical standards and related design paradigms might be different today, although XFS must have been quite good for it to have been ported to Linux.

That reminds me that I have an SGI Indy [1] lying around, which was running IRIX, a real UNIX with XFS as one of its filesystems. I used it as a workstation around the turn of the century, but soon switched back to Linux. It was not the most powerful computer made by SGI, but it had decent graphics for that time and the IndyCam (a webcam). Not so many years later SGI went bankrupt.

[1] https://www.digibarn.com/collections/articles/sgi-indy/index.html

XFS does not shrink. That is not specific to LVM.

It is and is. See max file and filesystem sizes in Red Hat Enterprise Linux Technology Capabilities and Limits - Red Hat Customer Portal

We too had SGI/IRIX (as long as we could). SGI had 3D stereo graphics hardware, was involved in OpenGL and C++, etc. With them gone, our main fileserver (now Linux) had XFS before Red Hat adopted it.

Back then disks were “small”. Everybody wanted to get more; almost nobody was shrinking. When one could get more disks, the question, just like the OP’s, was how to conveniently share that resource among users. One big volume is much more attractive than many small ones. An XFS volume could be much larger than an ext* one.

Extending a filesystem is adding something to it. That is easier than deallocating, because deallocation requires first moving data away from the blocks that will be deallocated. The XFS metadata layout, whatever it is, lacks such a feature. “Take what you can and give nothing back!”
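The asymmetry between growing and shrinking shows up even in a toy model. The sketch below treats a filesystem as a plain list of blocks, where `None` means free. That has nothing to do with how XFS or ext4 really lay out data; it only illustrates why shrinking needs a relocation step that growing does not.

```python
def grow(blocks, extra):
    """Growing just appends free blocks; existing data never moves."""
    return blocks + [None] * extra


def shrink(blocks, new_len):
    """Shrinking must first relocate every used block beyond the new end.

    Returns the new block list and the number of blocks that had to move.
    """
    kept = blocks[:new_len]
    tail_used = [b for b in blocks[new_len:] if b is not None]
    free_slots = [i for i, b in enumerate(kept) if b is None]
    if len(tail_used) > len(free_slots):
        raise ValueError("not enough free space below the new end")
    for slot, data in zip(free_slots, tail_used):
        kept[slot] = data
    return kept, len(tail_used)


blocks = ["a", None, "b", None, None, "c"]

print(grow(blocks, 2))    # same data, two extra free blocks at the end
print(shrink(blocks, 4))  # block "c" has to move before the tail can go
```

A real filesystem also has to update every piece of metadata that points at the moved blocks, which is the part XFS has no mechanism for.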

XFS has project quota in addition to user and group quotas. That is awesome.

I remember working with Irix back in the mid-1990s myself. Their graphics were legendary back in those days. They were heavily used for flight simulators to train pilots. I never worked with the filesystems on the different Irix systems we had to use. I worked with Indigos and an O2 (I think that was the model); it’s been so long.